The primary driver for the Cloud Exit is “bill shock.” The promise of the cloud was a lower Total Cost of Ownership (TCO) through scalability, but many enterprises found that the “pay-as-you-go” model actually meant “pay-more-as-you-grow.” When egress fees, storage premiums, and management overhead are tallied, the ROI of the public cloud often evaporates for steady-state workloads.
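To make the tally concrete, here is a minimal sketch of a break-even calculation. Every figure and name in it (`CloudCosts`, `OnPremCosts`, the monthly amounts) is a hypothetical placeholder rather than real pricing, and the model deliberately ignores hardware refresh cycles, financing, and discounts.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CloudCosts:
    """Recurring monthly cloud spend (all values are hypothetical)."""
    compute: float
    storage: float
    egress: float
    management: float

    def monthly_total(self) -> float:
        return self.compute + self.storage + self.egress + self.management


@dataclass
class OnPremCosts:
    """One-time hardware purchase plus recurring running costs (hypothetical)."""
    hardware_capex: float
    opex_per_month: float  # power, cooling, space, staff


def break_even_month(cloud: CloudCosts, on_prem: OnPremCosts,
                     horizon_months: int = 60) -> Optional[int]:
    """Return the first month in which cumulative on-prem spend is no higher
    than cumulative cloud spend, or None if that never happens in the horizon."""
    for month in range(1, horizon_months + 1):
        cloud_spend = cloud.monthly_total() * month
        on_prem_spend = on_prem.hardware_capex + on_prem.opex_per_month * month
        if on_prem_spend <= cloud_spend:
            return month
    return None


# Hypothetical steady-state workload: 30,000 per month in the cloud
cloud = CloudCosts(compute=20_000, storage=5_000, egress=3_000, management=2_000)
# Hypothetical on-prem alternative: 400,000 up front, 10,000 per month to run
on_prem = OnPremCosts(hardware_capex=400_000, opex_per_month=10_000)

print(break_even_month(cloud, on_prem))  # prints 20
```

With these placeholder numbers the hardware pays for itself in month 20. If the on-premise running cost were as high as the cloud bill, the function would return `None`, which is the case where staying in the cloud makes sense.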

Furthermore, the generative AI boom has fundamentally changed the math. Training and running high-performance models require massive GPU compute, and renting these chips from cloud providers is prohibitively expensive for many. By investing in their own hardware ecosystem, companies can run their proprietary models with minimal network latency and without the recurring “compute tax” of the hyperscalers.

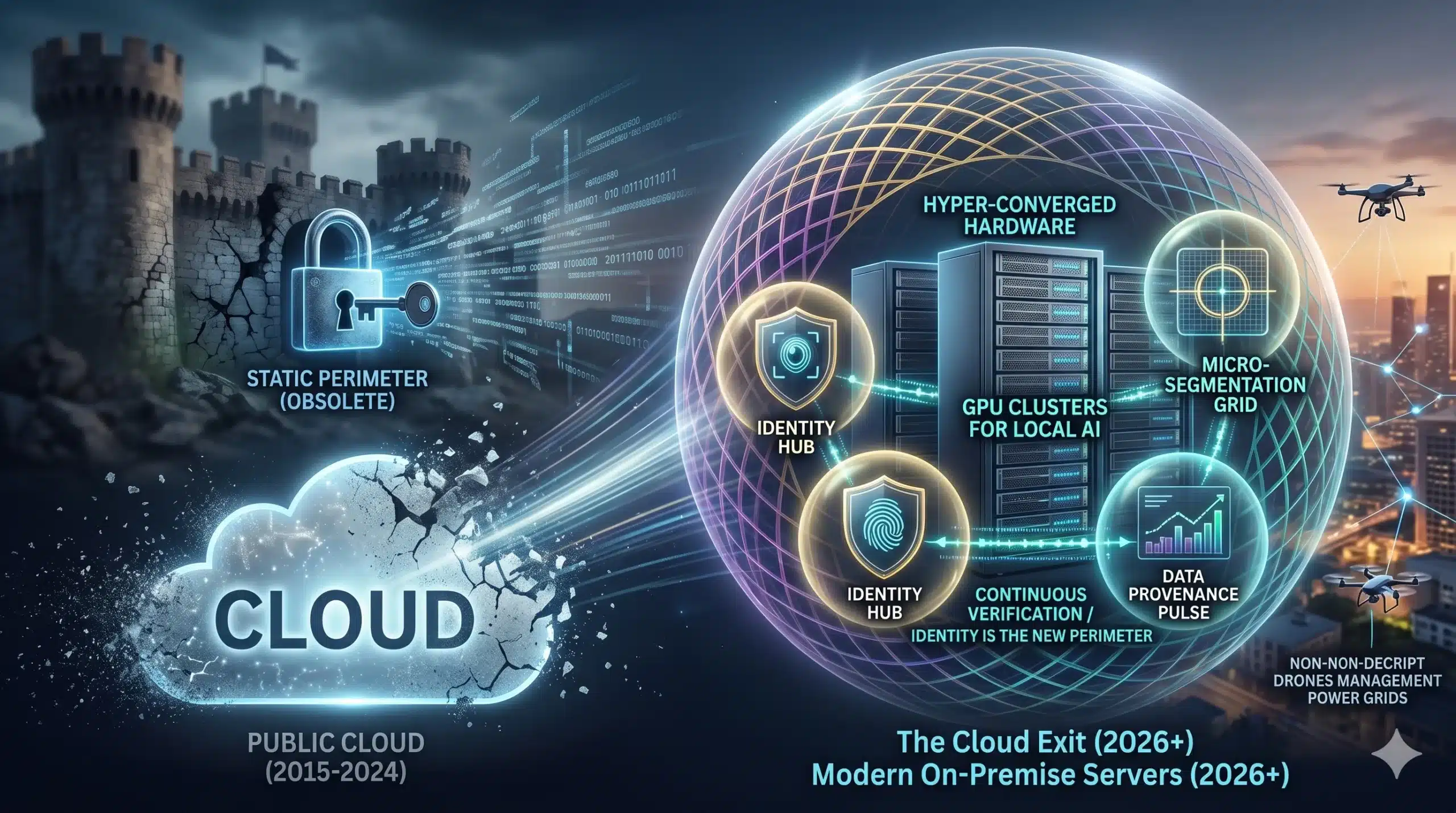

Technical Breakdown: The Modern On-Premise Stack

Today’s on-premise solutions bear little resemblance to the dusty server closets of the early 2000s. They are built on “Cloud-Native” principles that provide the flexibility of the cloud with the control of local hardware.

- Hyper-Converged Infrastructure (HCI): This combines compute, storage, and networking into a single software-defined system, making on-premise hardware as easy to scale as cloud instances.

- Kubernetes and Containerization: By packaging workloads as containers, enterprises can run the same images on their local servers and on the remaining cloud services, allowing for a “Hybrid” model that moves workloads to where they are most cost-effective.

- GPU Clusters for Local AI: High-density server racks equipped with specialized AI accelerators allow companies to keep their sensitive data local while still leveraging state-of-the-art machine learning.

- Advanced “LOM” (Lights-Out Management): Modern hardware can be monitored, power-cycled, and reprovisioned remotely, meaning you don’t need a massive on-site IT team to keep the infrastructure running 24/7. Physical component swaps remain the main hands-on task.
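The “Hybrid” placement idea from the list above can be sketched as a simple decision rule. This is an illustration under stated assumptions, not how any particular scheduler works: the `Workload` type, the `place` function, and the cost figures are all hypothetical. In a real Kubernetes cluster, a decision like this would typically be expressed through node labels and affinity rules rather than application code.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Workload:
    """A hypothetical workload description used only for this sketch."""
    name: str
    sensitive_data: bool            # must stay on local hardware
    monthly_cost: Dict[str, float]  # estimated cost per location


def place(workload: Workload) -> str:
    """Pin sensitive workloads on-prem; otherwise pick the cheapest location."""
    if workload.sensitive_data:
        return "on-prem"
    return min(workload.monthly_cost, key=workload.monthly_cost.get)


# Hypothetical workloads and cost estimates
workloads = [
    Workload("core-database", True, {"on-prem": 4_000, "cloud": 3_500}),
    Workload("nightly-batch", False, {"on-prem": 1_200, "cloud": 2_000}),
    Workload("seasonal-burst", False, {"on-prem": 9_000, "cloud": 2_500}),
]

for w in workloads:
    print(f"{w.name}: {place(w)}")
```

Note that the sensitive workload stays local even though the cloud estimate is lower: in this sketch, data control outranks cost.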

The Infrastructure Paradigm Shift

| Feature | Public Cloud (2015–2024) | Modern On-Premise (2026+) |
| --- | --- | --- |
| Cost Structure | OPEX (Recurring/Variable) | CAPEX (Initial Investment) |
| Data Control | Shared / Third-Party | Absolute Sovereignty |
| Latency | Network Dependent | Near-Zero (LAN Speeds) |
| Scaling | Instant / Expensive | Planned / High ROI |

Real-World Impact: Sovereignty in Action

The integration of modern on-premise hardware is transforming high-stakes industries. In Financial Services, banks are repatriating their core processing engines to local servers to meet increasingly strict “Digital Sovereignty” laws. By keeping data within their own physical walls, they remove their dependence on a third-party cloud provider's uptime for core operations, and they improve their long-term ROI.

For the Digital Entrepreneur, the Cloud Exit looks like “Local-First” development. A game studio like Druvion Studio might use the cloud for global distribution, but keep their heavy rendering and source code on a local high-performance server in Odisha. This ensures that their intellectual property never leaves their control and that their development cycle isn’t throttled by internet bandwidth or cloud seat-licensing costs.

In Manufacturing, companies are using local “Edge-Farms” to manage the sensors and robotic arms on the factory floor. Because the processing happens on-premise, the machines can react in milliseconds to anomalies, ensuring a level of safety and precision that the “laggy” public cloud simply cannot provide.
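As a rough illustration of the kind of check that can run entirely on a local edge node, here is a minimal rolling-window anomaly detector. The class name, window size, threshold, and sensor values are assumptions made for this sketch; real factory systems would use logic tuned to their own equipment and safety requirements.

```python
from collections import deque
from statistics import mean, pstdev


class AnomalyDetector:
    """Flags readings that deviate sharply from a rolling window of recent values."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_samples: int = 10):
        self.readings = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold            # allowed deviation, in standard deviations
        self.min_samples = min_samples        # readings needed before judging

    def check(self, value: float) -> bool:
        """Return True if the value is an anomaly relative to the window."""
        is_anomaly = False
        if len(self.readings) >= self.min_samples:
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) > self.threshold * sigma:
                is_anomaly = True
        if not is_anomaly:
            # Only normal readings update the baseline
            self.readings.append(value)
        return is_anomaly


# Hypothetical temperature readings from a single sensor
detector = AnomalyDetector(window=20)
for i in range(20):
    detector.check(20.0 + 0.2 * (i % 2))  # normal values around 20.1

print(detector.check(35.0))  # prints True
print(detector.check(20.1))  # prints False
```

Because the check is a few arithmetic operations on in-memory data, there is no network round trip between the sensor reading and the decision.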

Challenges & Ethics: The Bottlenecks of Ownership

Moving back to on-premise isn’t without its “bottlenecks.” It requires a shift in mindset and a significant upfront commitment.

- Upfront Capital: Unlike the “swipe-to-start” cloud model, on-premise requires a large initial investment in hardware. For smaller startups, this can be a barrier to entry and can slow early growth.

- The Energy Responsibility: When you own the servers, you own the carbon footprint. Enterprises must now manage their own cooling and power efficiency, leading to the rise of localized GreenOps strategies.

- Talent Scarcity: Managing a modern, hyper-converged data center requires specialized skills. There is currently a “skills gap” as many engineers spent the last decade learning cloud APIs rather than hardware management.
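For the energy point in the list above, a common starting metric is Power Usage Effectiveness (PUE): total facility power divided by the power drawn by IT equipment alone. The sketch below computes PUE along with a rough annual energy and emissions estimate. The load figures and the grid carbon intensity are hypothetical placeholders, and the calculation assumes a constant load all year.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def annual_energy_kwh(total_facility_kw: float) -> float:
    """Energy used in a year, assuming a constant load (a simplification)."""
    return total_facility_kw * HOURS_PER_YEAR


def annual_emissions_kg(total_facility_kw: float, grid_kg_co2_per_kwh: float) -> float:
    """Rough CO2 estimate from annual energy and grid carbon intensity."""
    return annual_energy_kwh(total_facility_kw) * grid_kg_co2_per_kwh


# Hypothetical server room: 100 kW of IT load, 150 kW total including cooling
print(pue(150, 100))                  # prints 1.5
print(annual_energy_kwh(150))         # prints 1314000
print(annual_emissions_kg(150, 0.5))  # prints 657000.0
```

A PUE closer to 1.0 means less of the power bill goes to cooling and other overhead, which is the number a GreenOps effort would try to push down.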

The 3-5 Year Outlook: The Hybrid Equilibrium

By 2029, the “Cloud Exit” will settle into a “Hybrid Equilibrium.” We won’t see the death of the cloud, but rather its demotion to a specialized tool for burst-compute and global delivery. The “brain” of the enterprise will live on-premise, while the “limbs” will extend into the cloud.