Corporate liability has moved from the filing cabinet to the source code. For decades, “compliance” meant checking spreadsheets and ensuring physical safety standards were met. But in 2026, the most significant risks to a brand aren’t found in a warehouse; they are hidden within the “black box” of automated decision-making. When an AI mistakenly rejects a mortgage application, shadow-bans a creator, or discriminates in a hiring loop, “we didn’t know how it worked” no longer works as a defense. It reads as an admission of negligence.

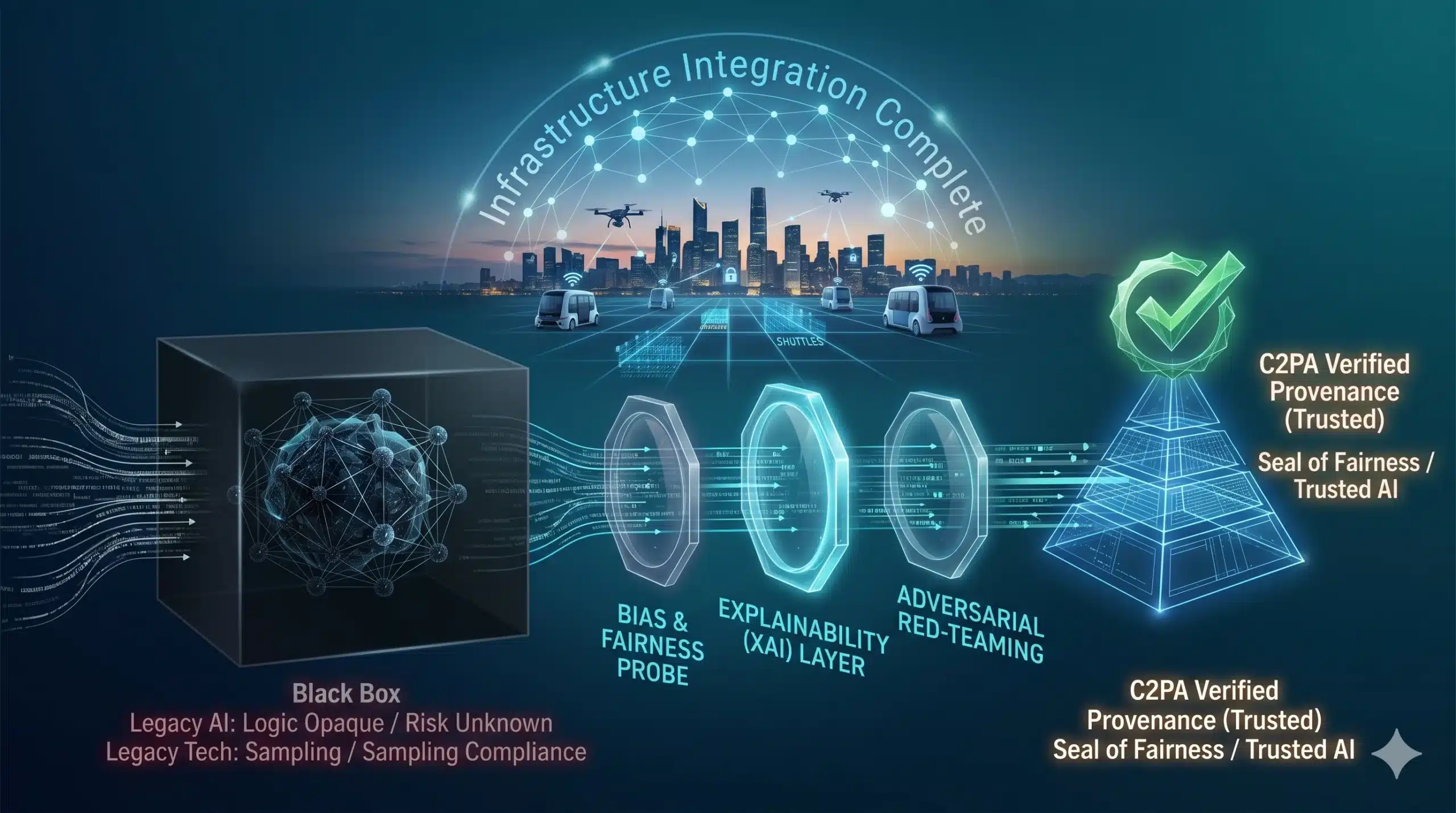

We have entered the era of Algorithmic Auditing. As AI moves from a novelty to the core infrastructure of global commerce, the demand for transparency has birthed a new class of professional oversight. This isn’t just about catching bugs; it’s about the systematic verification of fairness, safety, and explainability. In the high-stakes world of modern enterprise, the auditor’s checklist has been replaced by the neural network probe.

The “Why”: The End of AI Impunity

The shift toward algorithmic auditing is driven by a global regulatory “pincer movement.” From the EU AI Act to localized mandates in North America and Asia, governments are demanding that high-risk AI systems be auditable by design. The economic cost of non-compliance is now a material threat to ROI: the EU AI Act allows fines of up to €35 million or 7% of worldwide annual turnover for the most serious violations, a higher ceiling than GDPR’s €20 million or 4%.

Technologically, the shift is forced by the complexity of the modern ecosystem. As companies integrate third-party models into their proprietary workflows, they inherit “algorithmic debt.” If a vendor’s model contains a hidden bias, the company that deploys it can still be held responsible for the fallout. Auditing has become the “stress test” for the digital age, checking that the integration of AI doesn’t create a systemic collapse of corporate integrity.

Technical Breakdown: The Auditor’s Toolkit

Algorithmic auditing is a multi-dimensional process that looks beyond simple output to understand the “reasoning” behind the machine.

- Bias and Fairness Testing: Auditors use “synthetic cohorts” to stress-test how an algorithm treats protected groups. By varying only attributes like race, gender, or age while holding every other input constant, they can test statistically whether a model favors one group over another.

- Explainability (XAI) Probing: Using techniques like SHAP (SHapley Additive exPlanations), auditors identify which input features had the most influence on a final decision.

- Adversarial Robustness: The “red-teaming” of an algorithm. Auditors attempt to “trick” the model with edge-case data to see if it triggers a failure or produces unsafe outputs.

- Data Provenance Review: Verifying the “digital birth certificate” of the training data. This ensures the model wasn’t built on stolen IP, biased historical records, or non-consensual personal information.
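To make the synthetic-cohort idea from the first bullet concrete, here is a minimal sketch of a counterfactual flip test. Everything in it is an assumption for illustration: the two toy models, the feature names, the group labels, and the 0.6 approval threshold are all hypothetical, not taken from any real system.

```python
import random

GROUPS = ("group_a", "group_b")  # hypothetical protected-attribute values


def base_score(applicant):
    # Toy scoring rule: equal weight on normalized income and credit score.
    return 0.5 * applicant["income"] / 100_000 + 0.5 * applicant["credit"] / 850


def neutral_model(applicant):
    """Hypothetical model that ignores the group label."""
    return base_score(applicant) >= 0.6


def leaky_model(applicant):
    """Hypothetical model that directly penalizes group_b."""
    penalty = 0.10 if applicant["group"] == "group_b" else 0.0
    return base_score(applicant) - penalty >= 0.6


def synthetic_cohort(n, seed=0):
    """Generate n synthetic applicants with random income and credit values."""
    rng = random.Random(seed)
    return [
        {
            "income": rng.randint(20_000, 100_000),
            "credit": rng.randint(300, 850),
            "group": "group_a",
        }
        for _ in range(n)
    ]


def flip_rate(model, cohort):
    """Share of applicants whose decision changes when only the group changes."""
    if not cohort:
        raise ValueError("cohort must not be empty")
    flips = 0
    for applicant in cohort:
        # Re-score the same applicant once per group label, all else equal.
        decisions = {model({**applicant, "group": g}) for g in GROUPS}
        flips += len(decisions) > 1
    return flips / len(cohort)


if __name__ == "__main__":
    cohort = synthetic_cohort(1_000)
    print("neutral model flip rate:", flip_rate(neutral_model, cohort))
    print("leaky model flip rate:", flip_rate(leaky_model, cohort))
```

A flip rate of zero only shows that the model does not react to the group label itself. It says nothing about proxy features, which need separate tests on real outcome data.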
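The explainability bullet mentions SHAP. The Shapley idea behind it can be sketched by brute force on a tiny model: average each feature's marginal contribution over every possible ordering of the features. This is not the SHAP library's API, which relies on faster approximations. The function names, the toy scoring formula, and the all-zero baseline below are hypothetical.

```python
from itertools import permutations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution for one prediction.

    predict  : function taking a dict of feature values, returning a number
    instance : feature values of the decision being explained
    baseline : reference feature values standing in for "feature absent"
    """
    features = list(instance)
    totals = {name: 0.0 for name in features}
    for order in permutations(features):
        current = dict(baseline)
        previous = predict(current)
        for name in order:
            # Switch one feature from baseline to its real value and
            # credit that feature with the change in the prediction.
            current[name] = instance[name]
            value = predict(current)
            totals[name] += value - previous
            previous = value
    n_orders = factorial(len(features))
    return {name: total / n_orders for name, total in totals.items()}


def toy_score(x):
    # Hypothetical model with an interaction between income and tenure.
    return 2 * x["income"] - 3 * x["debt"] + x["income"] * x["tenure"]


instance = {"income": 4, "debt": 1, "tenure": 2}
baseline = {"income": 0, "debt": 0, "tenure": 0}

attributions = shapley_values(toy_score, instance, baseline)
print(attributions)
```

The attributions always sum to the difference between the prediction for the instance and the prediction for the baseline, which is what makes them usable as an audit trail. The loop visits every ordering, so the cost grows factorially with the number of features. That is why practical tools approximate.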

The Compliance Evolution

| Feature | Legacy Compliance (Old Tech) | Algorithmic Auditing (2026+) |
| --- | --- | --- |
| Audit Subject | Financials & Physical Assets | Neural Networks & Logic Weights |
| Verification | Periodic / Sampling | Continuous / Automated |
| Primary Goal | Fraud Detection | Fairness & Safety Verification |
| Human Role | Spreadsheet Reviewer | Data Ethicist / AI Architect |

Real-World Impact: Trust as a Competitive Edge

The integration of auditing into the corporate lifecycle is turning “trust” into a measurable asset. In Fintech, an audited loan algorithm allows a bank to expand its lending pool with confidence, knowing the system won’t inadvertently trigger a discriminatory lending lawsuit. This precision directly improves scalability, allowing the bank to automate more of its operations without increasing its legal risk profile.

For a digital entrepreneur managing a large content ecosystem, algorithmic auditing acts as a brand shield. By auditing their recommendation engines, they can demonstrate to advertisers that their platform doesn’t promote toxic content or suppress specific voices. This transparency builds long-term value, helping their MozStriker blogs or Druvion Studio game communities remain healthy and monetizable.

In Human Resources, companies are using third-party auditors to verify that their AI-driven “resume screeners” are actually looking for skill sets rather than proxies for socioeconomic status. This helps ensure the best talent is hired, regardless of where they are, from a tech hub in Bengaluru to a remote workspace in Odisha.
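One simple check an auditor might run on a resume screener is to compare selection rates across groups. The sketch below divides each group's selection rate by the highest group's rate. It uses the “four-fifths” rule of thumb from the US EEOC's Uniform Guidelines, which treats a ratio below 0.8 as a signal of possible adverse impact. The group labels and counts are made up, and the right threshold in other jurisdictions is an open assumption.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs -> {group: selection rate}."""
    seen, chosen = Counter(), Counter()
    for group, selected in records:
        seen[group] += 1
        chosen[group] += bool(selected)
    return {group: chosen[group] / seen[group] for group in seen}


def adverse_impact_ratios(records):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selections")
    return {group: rate / highest for group, rate in rates.items()}


# Hypothetical screening log: 50 of 100 selected in group_a, 30 of 100 in group_b.
log = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

THRESHOLD = 0.8  # four-fifths rule of thumb; a screening signal, not a verdict

for group, ratio in adverse_impact_ratios(log).items():
    status = "review" if ratio < THRESHOLD else "ok"
    print(f"{group}: ratio={ratio:.2f} -> {status}")
```

A low ratio does not prove discrimination, and a passing ratio does not rule out proxy effects. It is a first-pass screen that tells the auditor where to look more closely.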

Challenges & Ethics: The Transparency Bottleneck

The move toward total transparency faces significant “bottlenecks” related to proprietary secrets and technical overhead.

- The IP Conflict: Companies often argue that revealing how their algorithm works would expose their “secret sauce” to competitors. Balancing “public safety” with “trade secrets” is the primary legal friction of 2026.

- The Complexity Gap: Modern LLMs have billions of parameters. Auditing them for every possible edge case is computationally expensive and requires a level of expertise that most traditional auditing firms currently lack.

- Inference Costs: Continuous, real-time auditing of high-frequency algorithms (like those used in stock trading) adds compute and latency overhead to every decision, which can slow down the very systems being monitored.
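One common way to limit the overhead described in the last bullet is to audit a reproducible sample of decisions rather than every one. The sketch below assumes each decision has a stable string ID. The function name, ID format, and 1% rate are hypothetical, and whether sampling satisfies a given regulator is outside its scope.

```python
import hashlib


def should_audit(decision_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly `sample_rate` of all decision IDs."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    digest = hashlib.sha256(decision_id.encode("utf-8")).digest()
    # Treat the first 8 bytes as an integer in [0, 2**64) and compare it
    # against the matching fraction of that range.
    bucket = int.from_bytes(digest[:8], "big")
    return bucket < sample_rate * 2**64


# Usage: only the sampled decisions go through the expensive audit path.
decision_ids = [f"decision-{i}" for i in range(20_000)]
audited = [d for d in decision_ids if should_audit(d, 0.01)]
print(f"audited {len(audited)} of {len(decision_ids)} decisions")
```

Hashing the ID instead of calling a random number generator keeps the sample reproducible: an external auditor can recompute which decisions should have been logged and confirm none were quietly skipped.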

The 3-5 Year Outlook: The Rise of the AI Notary

By 2029, we may well see the emergence of the “AI Notary”: independent, certified firms that provide a “Seal of Fairness” to algorithms, much like a LEED certification for a green building. Consumers would look for this seal before trusting an app with their health data or their finances.