The honeymoon phase with the “chat box” is officially over. For the past few years, we have been captivated by Large Language Models (LLMs) that answer questions, summarize PDFs, and write mediocre poetry. But for the modern enterprise, a tool that simply talks is a tool that requires constant babysitting. The real friction isn’t a lack of information; it’s the “integration tax”—the hours humans spend copying data from an AI chat into a CRM, an email, or a project management board.

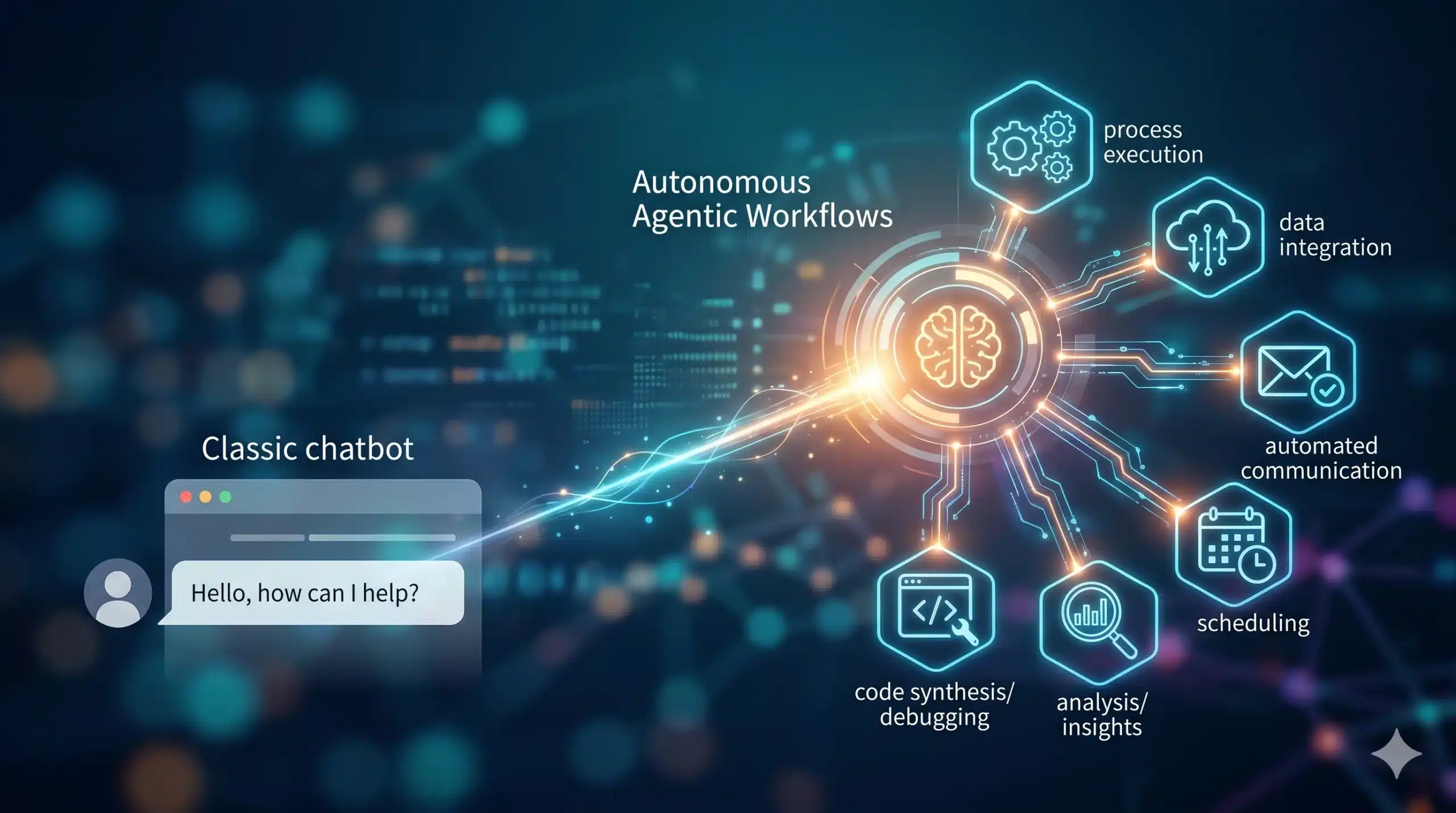

Beyond the Chatbot: The Rise of Autonomous Agentic Workflows

We are now entering the era of Agentic Workflows. Unlike passive chatbots, these autonomous systems don’t just suggest solutions; they execute them. They are moving from the passenger seat to the cockpit, fundamentally shifting AI from a conversational interface to an operational engine.

The Shift: Why Conversations Aren’t Enough

The economic driver behind agentic workflows is the pursuit of true scalability. Traditional automation (RPA) was brittle; if a UI button moved three pixels to the left, the bot broke. Standard generative AI has the opposite problem: it is flexible but lacks “agency.” It cannot independently correct its own mistakes or work across multiple software ecosystems without a human in the loop.

In 2026, the “integration tax” is the primary bottleneck for ROI. Organizations have realized that having an AI draft an invoice is of little use if a human still has to manually verify the vendor, check the budget, and hit ‘Send.’ The shift to autonomous agents is driven by the need to close this “last-mile” execution gap. By allowing AI to reason through a sequence of steps, businesses are moving away from task-based AI toward outcome-oriented AI.

Technical Breakdown: How Agentic Systems Work

At its core, an agentic workflow is a loop of reasoning, tool-use, and self-correction. It isn’t a single prompt; it is a multi-step orchestration.

- Iterative Reasoning: The agent breaks a complex goal (e.g., “Onboard this new client”) into sub-tasks using prompting techniques such as Chain-of-Thought (CoT) or ReAct.

- Tool Engagement: Agents are equipped with APIs and “perception” layers that allow them to read databases, browse the web, and interact with third-party SaaS tools.

- Memory Management: Short-term memory tracks the current task state, while long-term memory (often via Vector Databases) recalls past interactions and company-specific preferences.

- Self-Correction (Reflection): The agent reviews its own output. If a code execution fails or a data point looks like an outlier, the agent re-runs the logic before ever showing the result to a human.
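
The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `plan` function stands in for an LLM reasoning step, the two tools are hypothetical stubs, self-correction is reduced to retrying a failed step, and long-term memory is left out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable

_email_calls = 0  # used only to simulate one transient failure


def create_account(client: str) -> str:
    """Hypothetical tool; a real one would call a CRM API."""
    return f"account created for {client}"


def send_welcome_email(client: str) -> str:
    """Hypothetical tool that fails on its first call, then succeeds."""
    global _email_calls
    _email_calls += 1
    if _email_calls == 1:
        raise TimeoutError("mail service timed out")
    return f"welcome email sent to {client}"


TOOLS: dict[str, Callable[[str], str]] = {
    "create_account": create_account,
    "send_welcome_email": send_welcome_email,
}


@dataclass
class AgentState:
    goal: str
    history: list[str] = field(default_factory=list)  # short-term memory


def plan(goal: str) -> list[tuple[str, str]]:
    """Stand-in for iterative reasoning: split the goal into tool calls."""
    client = goal.split(" ", 1)[1]
    return [("create_account", client), ("send_welcome_email", client)]


def run_agent(goal: str, max_retries: int = 2) -> AgentState:
    state = AgentState(goal)
    for tool_name, arg in plan(goal):
        for attempt in range(1, max_retries + 2):
            try:
                result = TOOLS[tool_name](arg)  # tool engagement
            except Exception as exc:
                # Reflection, reduced to its simplest form: note the failure, retry.
                state.history.append(f"{tool_name} failed (attempt {attempt}): {exc}")
                continue
            state.history.append(f"{tool_name}: {result}")
            break
        else:
            # Every attempt failed: stop and hand the task to a human.
            state.history.append(f"{tool_name}: gave up, escalating to a human")
            break
    return state


state = run_agent("Onboard Acme Corp")
for line in state.history:
    print(line)
```

The history list is what makes the run auditable: each step, including the failed attempt, is recorded before the agent moves on.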

The Evolution of Automation

| Feature | Legacy Chatbots (2023) | Agentic Workflows (2026) |
| --- | --- | --- |
| Primary Interaction | Prompt/Response | Goal/Outcome |
| Tool Use | None (Text only) | Full API & Browser Integration |
| Error Handling | Hallucinates or stops | Self-corrects and iterates |
| Human Role | Constant Supervision | Strategic Oversight |

Real-World Impact: From Operations to Innovation

The implications of this shift are most visible in complex, multi-layered environments. In Supply Chain Management, an agentic workflow doesn’t just flag a shipping delay; it automatically scans for alternative vendors, calculates the ROI of expedited shipping versus waiting, drafts the updated purchase orders, and notifies the warehouse manager.
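
To make the “expedite versus wait” step concrete, here is a small sketch of the comparison such an agent might run. The cost model and every number in it are hypothetical, chosen only for illustration; a real agent would pull these figures from inventory and carrier systems.

```python
def expedite_net_benefit(
    delay_days: int,
    daily_stockout_cost: float,
    expedite_fee: float,
    days_saved: int,
) -> float:
    """Loss avoided by expediting, minus the expedite fee (assumed cost model)."""
    avoided_loss = min(days_saved, delay_days) * daily_stockout_cost
    return avoided_loss - expedite_fee


# Hypothetical scenario: a 5-day delay, 4 of which expedited shipping recovers.
net = expedite_net_benefit(
    delay_days=5,
    daily_stockout_cost=1200.0,
    expedite_fee=3500.0,
    days_saved=4,
)
decision = "expedite" if net > 0 else "wait"
print(decision, net)
```

A positive net benefit argues for expediting; a negative one argues for waiting and notifying the warehouse manager of the new date.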

In Software Development, we are seeing the rise of “Agentic IDEs.” Instead of a developer asking for a function snippet, the agent identifies a bug in the repository, writes a fix, runs the unit tests, and submits a pull request for review. This doesn’t replace the engineer; it elevates them to an architect who focuses on high-level design while the agent handles the “grunt work.”

For the consumer, this looks like a “Universal Personal Assistant.” Imagine telling your phone, “Organize my trip to Tokyo next month,” and the agent manages the flights, syncs with your calendar, books restaurants based on your dietary history, and updates your budget—all without you opening five different apps.

The Bottlenecks: Challenges and Ethics

The transition to autonomy isn’t without friction. The most significant hurdle is Agency Risk. When you give an AI the “keys” to your bank account or your production database, the cost of an error skyrockets.

- Security & Permissions: Creating a “Least Privilege” model for AI is difficult. How do you ensure an agent doesn’t overreach and access sensitive payroll data while trying to schedule a meeting?

- The Hallucination Loop: If an agent makes a mistake in step one, it can spend hours (and significant compute cost) iterating on a flawed premise.

- The Energy Paradox: Running continuous reasoning loops is significantly more resource-intensive than a single chat response, making GreenOps and inference efficiency critical priorities.
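
Two of these risks, overreach and runaway loops, can be partly contained with a simple guard placed in front of every tool call. The sketch below is one possible shape for such a guard, not an established API: the `Guardrail` class, the scope names, and the step limit are all assumptions made for illustration.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    """Raised when the agent requests a scope it was never granted."""


class BudgetExceeded(Exception):
    """Raised when the agent has used up its allotted steps."""


@dataclass
class Guardrail:
    allowed_scopes: frozenset[str]  # least privilege: an explicit allowlist
    max_steps: int                  # hard cap on reasoning/tool iterations
    steps_used: int = 0

    def authorize(self, scope: str) -> None:
        """Call before every tool invocation; raises instead of proceeding."""
        if scope not in self.allowed_scopes:
            raise PermissionDenied(f"scope '{scope}' is not granted to this agent")
        if self.steps_used >= self.max_steps:
            raise BudgetExceeded(f"step budget of {self.max_steps} exhausted")
        self.steps_used += 1


# A scheduling agent gets calendar access only (hypothetical scope names).
scheduler = Guardrail(
    allowed_scopes=frozenset({"calendar:read", "calendar:write"}),
    max_steps=3,
)
scheduler.authorize("calendar:read")  # allowed, consumes one step
try:
    scheduler.authorize("payroll:read")  # outside the allowlist
except PermissionDenied as exc:
    print(exc)
```

The step cap does not stop an agent from reasoning on a flawed premise, but it bounds how much compute the mistake can consume before a human is pulled in.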

There is also the looming question of accountability. If an autonomous agent accidentally violates the GDPR or triggers a flash crash in a trading environment, the legal framework for “who is at fault” remains dangerously thin.

The 3-Year Outlook: The Invisible Engine

By 2029, the term “AI Agent” will likely disappear, simply because it will be the default state of all software. We will look back at the era of typing prompts into a blank box as a quaint, transitional phase—much like we now view the early days of “dialing” into the internet.