For years, artificial intelligence has been a ghost in the machine: brilliant at rearranging pixels and predicting the next word in a sentence, but helpless when asked to fold a basket of laundry or tighten a bolt. We have built digital brains that can pass the bar exam, yet they remain trapped behind glass screens, disconnected from the messy, unpredictable physical world. This “spatial gap” has long been the ceiling on the AI revolution.

We are finally shattering that glass. Physical AI represents the transition of Large Language Models (LLMs) from digital advisors to embodied actors. By merging the reasoning capabilities of generative AI with the kinetic potential of robotics, we are moving toward a reality where machines don’t just tell us how to solve a problem—they pick up the tools and do it themselves.

The “Why”: Why Now?

The push for Physical AI is driven by a severe labor shortage in manual sectors and a simultaneous steep drop in the cost of robotic hardware. Historically, robots were “hard-coded”: programmed with rigid if-then logic that failed the moment an object was slightly out of place. In a dynamic warehouse or a home kitchen, these legacy systems were functionally blind.
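To make the “hard-coded” failure mode concrete, here is a toy sketch (all names, positions, and tolerances are hypothetical) contrasting a routine that reaches for a taught coordinate with one that reaches for wherever a detector reports the object. The `noisy_detector` function is a stand-in for a vision model, not a real perception system.

```python
from dataclasses import dataclass

# Hypothetical toy scenario: a grasp succeeds only if the gripper closes
# within TOLERANCE_M of the object's true position.
TOLERANCE_M = 0.01

@dataclass
class Scene:
    object_x: float  # true object position along the bench, in metres

def legacy_pick(scene: Scene) -> bool:
    """Hard-coded routine: always reaches for the position it was taught."""
    taught_x = 0.50  # fixed coordinate programmed at commissioning time
    return abs(scene.object_x - taught_x) <= TOLERANCE_M

def perceptive_pick(scene: Scene, detect) -> bool:
    """Perception-driven routine: reaches for wherever the detector sees it."""
    target_x = detect(scene)
    return abs(scene.object_x - target_x) <= TOLERANCE_M

def noisy_detector(scene: Scene) -> float:
    # Stand-in for a vision model: reports the position with a 5 mm error.
    return scene.object_x + 0.005

for x in (0.50, 0.53):  # the object where it was taught, then nudged 3 cm
    scene = Scene(object_x=x)
    print(x, legacy_pick(scene), perceptive_pick(scene, noisy_detector))
# prints "0.5 True True", then "0.53 False True"
```

The legacy routine is perfect until the object moves 3 cm, at which point it fails every time; the perception-driven routine tolerates the shift as long as the detector's error stays inside the grasp tolerance.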

Technologically, the shift is enabled by “world models.” Instead of learning only from text, AI is now being trained on vast amounts of video and tactile sensor data, allowing it to learn approximate models of physics, gravity, and spatial relationships. For businesses, a robot that can “reason” its way through a cluttered room offers a far higher return than a stationary arm that performs one repetitive task. We are moving from specialized automation to general-purpose embodiment.
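The core idea behind a world model (learn how the world responds to actions, then plan with that learned model) can be shown at toy scale. The sketch below is a deliberately tiny stand-in, not how production world models are built: it assumes a 1-D cart whose velocity changes linearly with an applied push, estimates that single hidden gain from noisy experience by least squares, and then uses the estimate to choose an action. `TRUE_GAIN` and all other names are hypothetical.

```python
import random

# Hypothetical ground truth: change in velocity (m/s) per unit of push.
# The learner never reads this constant; it only sees noisy transitions.
TRUE_GAIN = 0.8

def real_world_step(velocity: float, force: float) -> float:
    """Ground-truth dynamics, observed only through noisy measurements."""
    return velocity + TRUE_GAIN * force + random.gauss(0.0, 0.01)

def fit_gain(transitions):
    """Least-squares estimate of k in  (v_next - v) = k * force."""
    num = sum((v_next - v) * f for v, f, v_next in transitions)
    den = sum(f * f for _, f, _ in transitions)
    return num / den

# 1. Collect experience by trying random pushes.
random.seed(0)
data = []
v = 0.0
for _ in range(200):
    f = random.uniform(-1.0, 1.0)
    v_next = real_world_step(v, f)
    data.append((v, f, v_next))
    v = v_next

# 2. Learn the forward model from that experience.
k_hat = fit_gain(data)

# 3. Plan with the learned model: which push takes the cart from rest to 0.4 m/s?
force_needed = (0.4 - 0.0) / k_hat
print(f"learned gain={k_hat:.3f}, planned force={force_needed:.3f}")
```

Real world models replace the single parameter with large neural networks trained on video and sensor streams, but the loop is the same shape: gather experience, fit a predictive model, and query it before acting.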

Technical Breakdown: The Architecture of Embodiment

Physical AI isn’t just a chatbot plugged into a motor. It is a sophisticated ecosystem of hardware and software that mimics human sensory-motor loops.

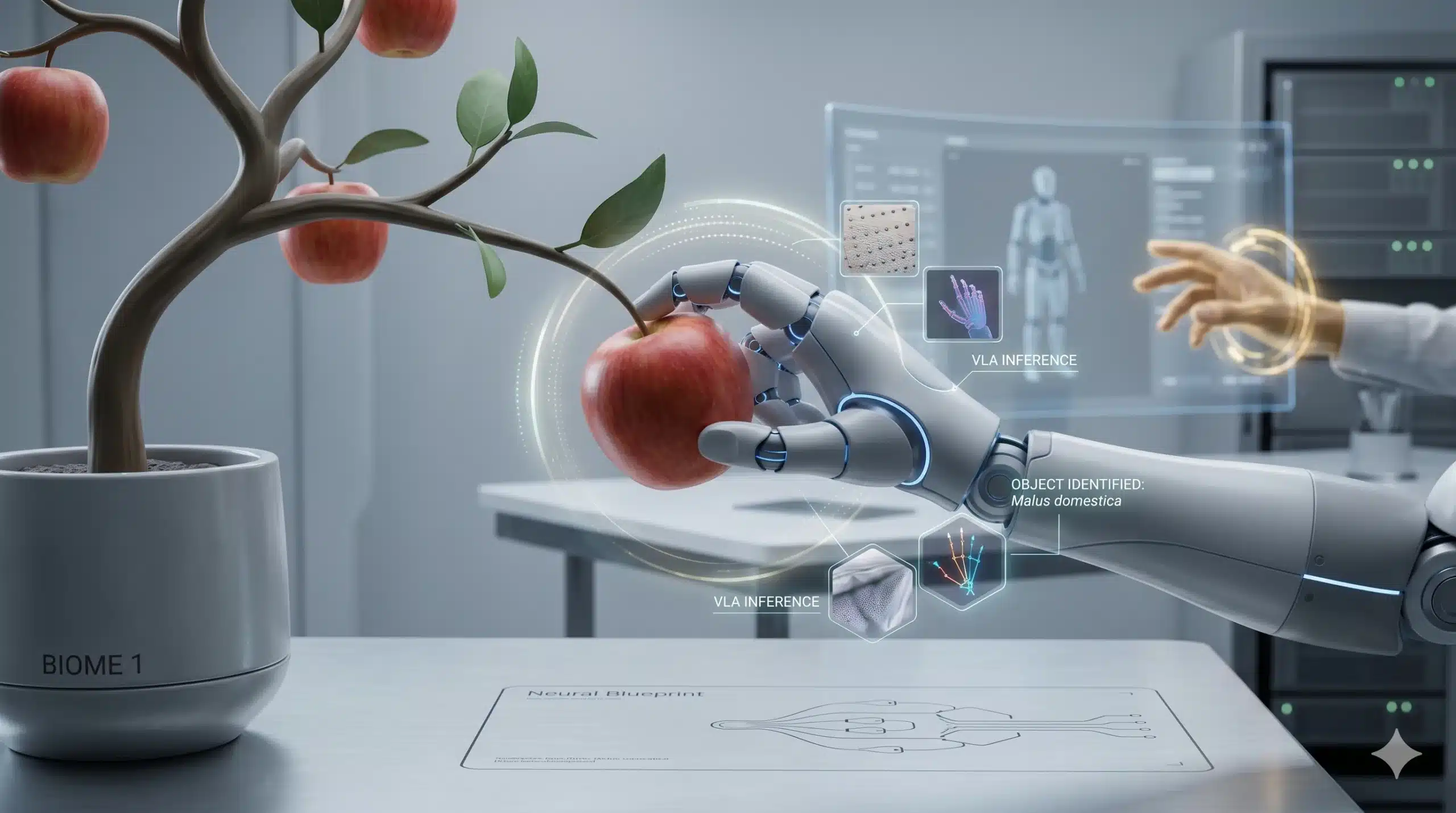

- Vision-Language-Action (VLA) Models: These are the “brains” of Physical AI. They don’t just process text; they process visual streams and translate them into motor commands (e.g., “Move arm 3 cm left to avoid the glass”).

- Edge Inference: To interact with the world in real time, the AI cannot wait for a round trip to a cloud server. On-board (“edge”) compute lets the robot make split-second decisions locally.

- Tactile Sensing: Modern grippers are now equipped with “electronic skin” that provides haptic feedback, allowing the AI to feel the difference between a delicate egg and a heavy wrench.

- Sim-to-Real Transfer: Using high-fidelity digital twins, AI agents “practice” millions of physical movements in a virtual environment before ever touching a real-world object, so training can scale far beyond what trial and error on physical hardware allows.
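The egg-versus-wrench example under tactile sensing is a feedback loop: squeeze until the object stops slipping, but never past the force that would damage it. The sketch below assumes a simplified two-finger friction model (the object holds when 2·μ·F ≥ m·g) standing in for a real slip sensor; the masses, friction coefficients, and crush limit are illustrative values, not measured ones.

```python
GRAVITY = 9.81  # m/s^2

def slipping(grip_force_n: float, mass_kg: float, friction: float) -> bool:
    """Simulated tactile slip signal for a two-finger grip.

    The object slips when the friction from both fingers (2 * mu * F)
    cannot support its weight (m * g).
    """
    return 2 * friction * grip_force_n < mass_kg * GRAVITY

def grasp(mass_kg: float, friction: float, max_force_n: float, step_n: float = 0.1):
    """Raise grip force in small steps until slip stops or the limit is hit.

    Returns the settled force in newtons, or None if holding the object would
    require more than max_force_n (e.g. the force that would crack an egg).
    """
    force = 0.0
    while slipping(force, mass_kg, friction):
        force += step_n
        if force > max_force_n:
            return None  # give up rather than crush the object
    return force

egg = grasp(mass_kg=0.06, friction=0.5, max_force_n=3.0)      # hypothetical crush limit
wrench = grasp(mass_kg=0.5, friction=0.4, max_force_n=20.0)
print(round(egg, 1), round(wrench, 1))  # 0.6 6.2
```

The same controller settles on a gentle grip for the egg and a firm one for the wrench without being told which object it holds; the difference comes entirely from the tactile signal.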

The Robotics Paradigm Shift

| Feature | Legacy Industrial Robots | Physical AI (2026+) |
| --- | --- | --- |
| Programming | Manual, rigid code | Natural Language / Observational |
| Environment | Controlled (Cages/Lines) | Unstructured (Homes/Offices) |
| Adaptability | Zero (Breaks on error) | High (Self-corrects in real time) |
| Learning | Static | Continuous (Learning by doing) |

Real-World Impact: From Construction to Care

The integration of Physical AI into the economy will redefine sectors that were previously “un-automatable.” In Construction, imagine a site where robots can interpret a blueprint, navigate muddy, shifting terrain, and lay red bricks with the precision of a master mason while adjusting for wind and uneven ground. This isn’t science fiction; it is the natural evolution of the G+1 (ground-plus-one-storey) housing boom meeting autonomous technology.

In Logistics, Physical AI allows for “fluid warehousing.” Instead of boxes coming to the robot, the robot navigates the warehouse, identifies damaged packaging by sight, and re-stacks pallets based on shifting weight distributions.
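Re-stacking “based on shifting weight distributions” ultimately comes down to tracking where the load’s combined center of mass sits. The sketch below is one simple heuristic, assuming box masses and positions are already known (for example, from vision plus a scale) and assuming a 1.2 m × 0.8 m pallet; the rule “place the next box wherever it keeps the center of mass closest to the pallet’s center” is our illustration, not an industry standard.

```python
import math

PALLET_CENTRE = (0.6, 0.4)  # assumed centre of a 1.2 m x 0.8 m pallet, in metres

def centre_of_mass(boxes):
    """boxes: list of (mass_kg, x_m, y_m) tuples giving each box's centre."""
    total = sum(m for m, _, _ in boxes)
    x = sum(m * bx for m, bx, _ in boxes) / total
    y = sum(m * by for m, _, by in boxes) / total
    return x, y

def offset_from_centre(boxes):
    """Horizontal distance between the load's centre of mass and the pallet centre."""
    x, y = centre_of_mass(boxes)
    return math.hypot(x - PALLET_CENTRE[0], y - PALLET_CENTRE[1])

def best_slot(boxes, new_mass, slots):
    """Pick the free slot that keeps the combined centre of mass closest to centre."""
    return min(slots, key=lambda s: offset_from_centre(boxes + [(new_mass, s[0], s[1])]))

load = [(20.0, 0.3, 0.2), (20.0, 0.3, 0.6)]  # two heavy boxes on the left half
slots = [(0.3, 0.4), (0.9, 0.4)]              # candidate free positions
print(best_slot(load, 25.0, slots))  # (0.9, 0.4): the right-hand slot rebalances the load
```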

For the individual consumer, the impact hits home—literally. We are seeing the rise of “General Purpose Home Assistants.” These aren’t just vacuum cleaners; they are machines capable of loading a dishwasher, organizing a pantry, or even assisting elderly users with mobility. The AI understands the intent (“Help me clean up”) and executes the physical sequence across diverse, unmapped rooms.

Challenges & Ethics: The Friction of Reality

Bringing AI into the physical realm introduces “bottlenecks” that a digital chatbot never had to face.

- Physical Safety: A hallucination in a chatbot results in a wrong fact; a hallucination in Physical AI can result in property damage or human injury. Guaranteeing that the system detects and halts an unsafe action within a bounded reaction time (“safe latency”) is the industry’s biggest hurdle.

- Energy Density: Reasoning is power-hungry. Running a VLA model while simultaneously powering high-torque motors requires battery technology that is currently struggling to keep up with the software’s demands.

- Privacy and Surveillance: For a robot to function in a home or office, it must continuously sense its surroundings. Ensuring this data stays local and is not used for invasive tracking is a major challenge for existing legal frameworks.
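One widely used pattern for the safety problem is to place a small, deterministic supervisor between the AI model and the motors, so that even a bad or late model output cannot drive the hardware unchecked. The sketch below is a minimal illustration under stated assumptions: the speed limits, the 50 ms staleness threshold, and the `Command`/`supervise` names are all hypothetical, and a real deployment would rely on certified safety functions rather than application code like this.

```python
import time
from dataclasses import dataclass

MAX_SPEED_NEAR_HUMAN = 0.25  # m/s, hypothetical limit when a person is close
MAX_SPEED_CLEAR = 1.0        # m/s, hypothetical limit in an empty workspace
COMMAND_TIMEOUT_S = 0.05     # commands older than 50 ms are treated as stale

@dataclass
class Command:
    speed: float      # requested end-effector speed in m/s (sign = direction)
    issued_at: float  # time.monotonic() timestamp when the model produced it

def supervise(cmd: Command, now: float, human_nearby: bool) -> float:
    """Return the speed actually sent to the motors."""
    if now - cmd.issued_at > COMMAND_TIMEOUT_S:
        return 0.0  # model too slow or stalled: stop instead of acting on stale data
    limit = MAX_SPEED_NEAR_HUMAN if human_nearby else MAX_SPEED_CLEAR
    return max(-limit, min(limit, cmd.speed))  # clamp to the allowed range

t = time.monotonic()
print(supervise(Command(0.8, t), t + 0.01, human_nearby=True))    # 0.25 (clamped)
print(supervise(Command(0.8, t), t + 0.2, human_nearby=True))     # 0.0 (stale command)
print(supervise(Command(-0.5, t), t + 0.01, human_nearby=False))  # -0.5 (passed through)
```

Keeping this layer simple is the point: it is small enough to test exhaustively, and it bounds the damage of a hallucinated or delayed command regardless of what the much larger model upstream does.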

The 3-5 Year Outlook: The Invisible Labor Force

By 2029, we will stop talking about “robots” as a separate category and start viewing them as the physical interface of the AI we already use. The office “AI assistant” will have a physical presence to handle filing or beverage service; the digital entrepreneur’s “logistics agent” will have a physical arm in a fulfillment center.