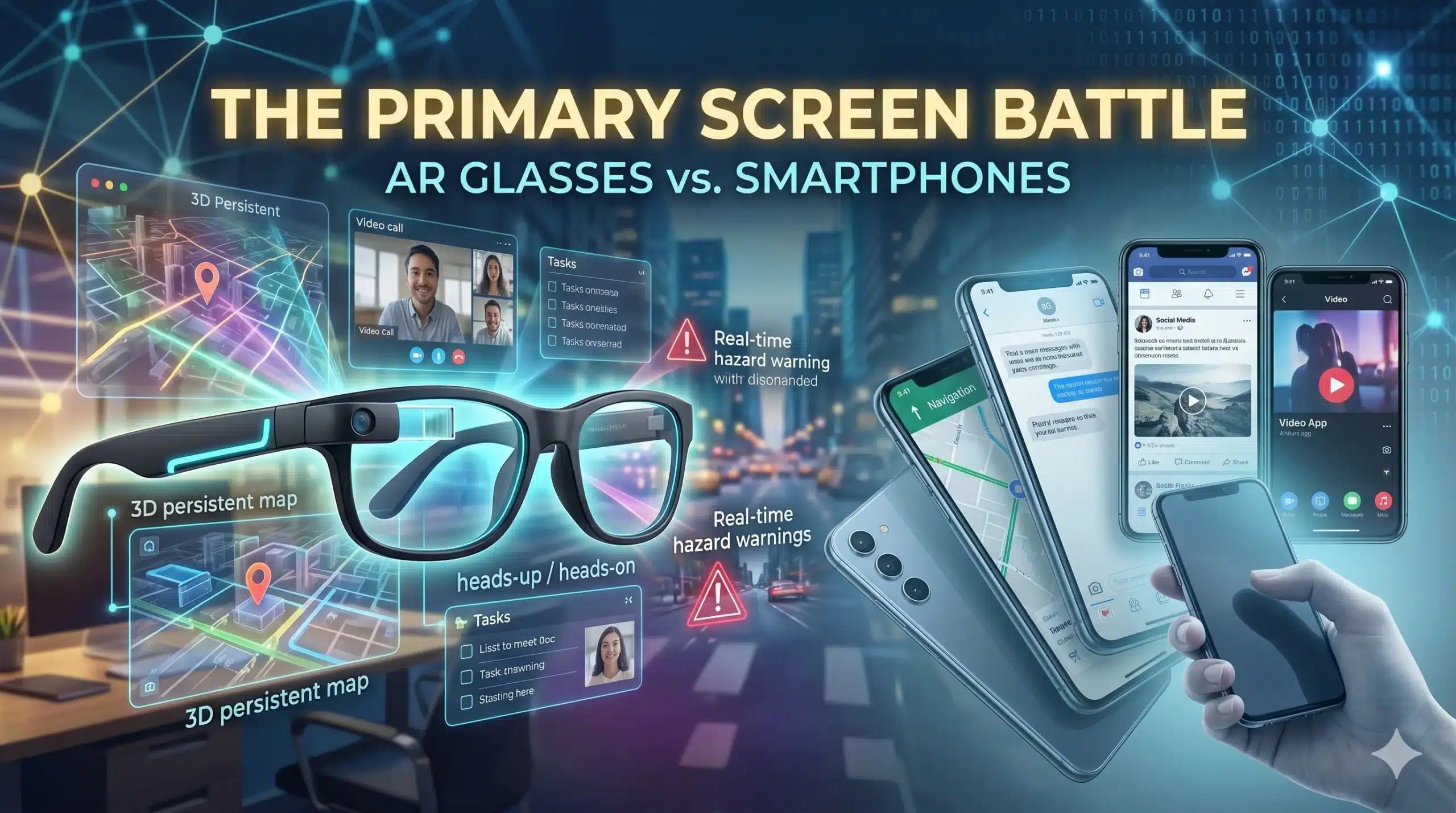

The glowing rectangle in your pocket is starting to feel like a tether. For nearly two decades, the smartphone has been the undisputed sun at the center of our digital solar system, but its dominance is finally being challenged by a fundamental shift in how we perceive data. We have reached “peak glass”—a point where looking down at a hand-held screen feels increasingly unnatural in a world that demands our heads-up attention.

The transition to Augmented Reality (AR) Glasses isn’t just about a new gadget; it’s about the death of the “frame.” We are moving from a world where we look at our digital lives to one where we live inside them. As spatial computing matures, the question is no longer if the smartphone will be replaced, but how quickly the “primary screen” will migrate from our palms to our eyes.

The “Why”: The Collapse of the Hand-Held Interface

The economic and psychological drive for AR is rooted in the friction of the “switch.” Every time you look down at a phone to check a notification, you disconnect from your physical environment. This “context switching” is a productivity killer. For enterprises, the ROI of keeping a worker’s hands free while providing real-time data overlays is staggering.

Technologically, the smartphone has hit a plateau. Incremental updates to camera megapixels or processor speeds no longer trigger the massive upgrade cycles of the past. Meanwhile, the miniaturization of optics and the rollout of 5G (with 6G still on the horizon) have made it possible to offload heavy processing to the cloud or a wearable puck. We are shifting from a mobile-first ecosystem to a spatial-first one because information is simply more valuable when it is contextual and persistent in our line of sight.

Technical Breakdown: Making Digital Light “Real”

Modern AR glasses are a marvel of optical engineering, relying on a sophisticated balance of weight, heat management, and transparency.

- Waveguide Optics: These are the “lenses” that steer light from a tiny projector into your eye. They typically use microscopic gratings to couple the projected image into a thin transparent plate, carry it along by internal reflection, and release it toward the eye, allowing pixels to coexist with the real world.

- Spatial Mapping (SLAM): Simultaneous Localization and Mapping allows the glasses to “understand” the room. This ensures that a digital window stays “pinned” to your physical wall even as you walk around it.

- Eye and Gesture Tracking: In the absence of a mouse or touch screen, your gaze is the cursor and your fingers are the buttons. Advanced infrared sensors track where you are looking and update that gaze point within milliseconds.

- Distributed Compute: To maintain a lightweight form factor, many glasses use “split processing,” where the glasses handle the sensors while a nearby device (or the cloud) handles the heavy rendering.
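
To make the “pinned window” and “gaze as cursor” ideas above a little more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular headset does it: the function names are hypothetical, the head pose is simply given (a real SLAM system would estimate it from camera and inertial data), rotation is limited to turning left and right, the head looks along +z in its own frame, and the virtual window is an axis-aligned rectangle on a wall at a fixed z.

```python
import math

def yaw_rotation(theta):
    """3x3 rotation about the vertical (y) axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]

def transpose(m):
    return [[m[j][i] for j in range(3)] for i in range(3)]

def world_to_head(point_world, head_rotation, head_position):
    """Express a world-space point in the head frame: R^T (p - t).

    The anchor's world coordinates never change; only this per-frame
    transform does, which is what keeps the window "pinned".
    """
    delta = [p - t for p, t in zip(point_world, head_position)]
    return mat_vec(transpose(head_rotation), delta)

def gaze_hits_panel(origin, direction, panel_center, half_width, half_height):
    """Ray-plane test for a panel lying in the plane z = panel_center[2]."""
    dz = direction[2]
    if abs(dz) < 1e-9:
        return False  # gaze is parallel to the wall
    t = (panel_center[2] - origin[2]) / dz
    if t <= 0:
        return False  # the wall is behind the viewer
    hit_x = origin[0] + t * direction[0]
    hit_y = origin[1] + t * direction[1]
    return (abs(hit_x - panel_center[0]) <= half_width
            and abs(hit_y - panel_center[1]) <= half_height)

if __name__ == "__main__":
    anchor = [0.0, 1.5, 3.0]           # window pinned to a wall 3 m away
    head_position = [1.0, 1.5, 0.0]    # wearer has stepped 1 m to the side
    yaw = math.atan2(-1.0, 3.0)        # and turned to face the window
    rotation = yaw_rotation(yaw)

    # Where the renderer should draw the window, relative to the head.
    print(world_to_head(anchor, rotation, head_position))

    # Gaze ray: the head's forward axis (+z) rotated into world space.
    gaze = mat_vec(rotation, [0.0, 0.0, 1.0])
    print(gaze_hits_panel(head_position, gaze, anchor, 0.5, 0.3))
```

The design point is that the window's world coordinates are stored once and never touched. Everything that changes as you walk around lives in the head pose, so the renderer only has to re-run the transform each frame.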

The Screen Paradigm Shift

| Feature | Smartphones (Legacy) | AR Glasses (2026+) |
| --- | --- | --- |
| Interaction | Touch / Heads-down | Gesture & Gaze / Heads-up |
| Display | Fixed 6-inch Frame | Infinite Spatial Canvas |
| Context | Abstract (Apps) | Grounded (Overlays) |
| Social Presence | Distracting / Isolating | Integrated / Collaborative |

Real-World Impact: The Death of the “Display”

The integration of AR into daily life will turn every surface into a potential screen. For a Digital Entrepreneur, this means their workstation is no longer limited by the size of their laptop. They can have a dozen virtual monitors floating in their home office in Odisha, syncing live AdX revenue streams alongside a 3D model of a zombie survival game level.

In the Automotive sector, the smartphone-based GPS becomes obsolete. Instead of glancing at a mount on the dashboard, a Suzuki rider sees a glowing blue line projected directly onto the road through their visor, highlighting hazards and turn-by-turn directions in their natural field of vision.

Even in Construction, the impact is tactile. A crew building a G+1 residential structure can “see” the plumbing and electrical conduits behind the red brick walls before they are even installed, reducing errors and improving scalability by ensuring the “digital twin” matches the physical reality perfectly.

Challenges & Ethics: The Privacy and Battery Bottlenecks

The road to the “post-smartphone” world is paved with significant technical and social “bottlenecks.”

- The Privacy Paradox: For AR to work, the glasses must “see” everything you see. This creates a massive data governance challenge. How do we prevent glasses from recording bystanders without consent?

- Thermal and Power Limits: Pushing high-resolution pixels into a pair of lightweight frames generates heat. Current battery life for “all-day” AR is still a struggle, often requiring a tethered power pack.

- Social Etiquette: Just as Google Glass faced pushback a decade ago, the social acceptance of wearing cameras on your face remains a hurdle. People need to know when they are being recorded and when the wearer is “present.”
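
The power bottleneck in particular comes down to simple arithmetic: runtime is battery capacity divided by average power draw. The sketch below works that through in Python. The function names are hypothetical and the numbers in the usage example are illustrative placeholders, not measurements of any real device.

```python
def runtime_hours(battery_wh, draw_watts):
    """Estimated runtime in hours for a battery of battery_wh watt-hours
    feeding a constant average load of draw_watts watts."""
    if draw_watts <= 0:
        raise ValueError("draw_watts must be positive")
    return battery_wh / draw_watts

def battery_needed_wh(target_hours, draw_watts):
    """Battery capacity (Wh) required to sustain draw_watts for target_hours."""
    if target_hours < 0 or draw_watts < 0:
        raise ValueError("inputs must be non-negative")
    return target_hours * draw_watts

if __name__ == "__main__":
    # Placeholder values chosen only to show the shape of the problem.
    in_frame_battery_wh = 2.0   # assumed capacity that fits in the frames
    average_draw_w = 1.0        # assumed average draw of display + sensors

    print(runtime_hours(in_frame_battery_wh, average_draw_w))
    print(battery_needed_wh(16, average_draw_w))
```

Whatever the real figures turn out to be, the relationship is linear: covering a full waking day means either cutting the average draw or carrying proportionally more capacity, which is why a tethered pack keeps showing up.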

The 3-5 Year Outlook: The Hybrid Era

By 2029, we won’t see the total disappearance of the smartphone, but we will see its demotion. It will transition into a “pocket server”—a headless brick of silicon that provides the processing power and 5G connectivity for the glasses on your face.