The “Swiss Army Knife” approach to artificial intelligence is hitting a wall of diminishing returns. While massive, general-purpose Large Language Models (LLMs) like GPT-4 or Gemini have dazzled the public with their ability to write everything from bedtime stories to Python scripts, the enterprise reality is proving much grittier. For a doctor, a general model that knows “a little bit about everything” is a liability; for a legal firm, a model that might prioritize poetic flow over case law precision is a danger.

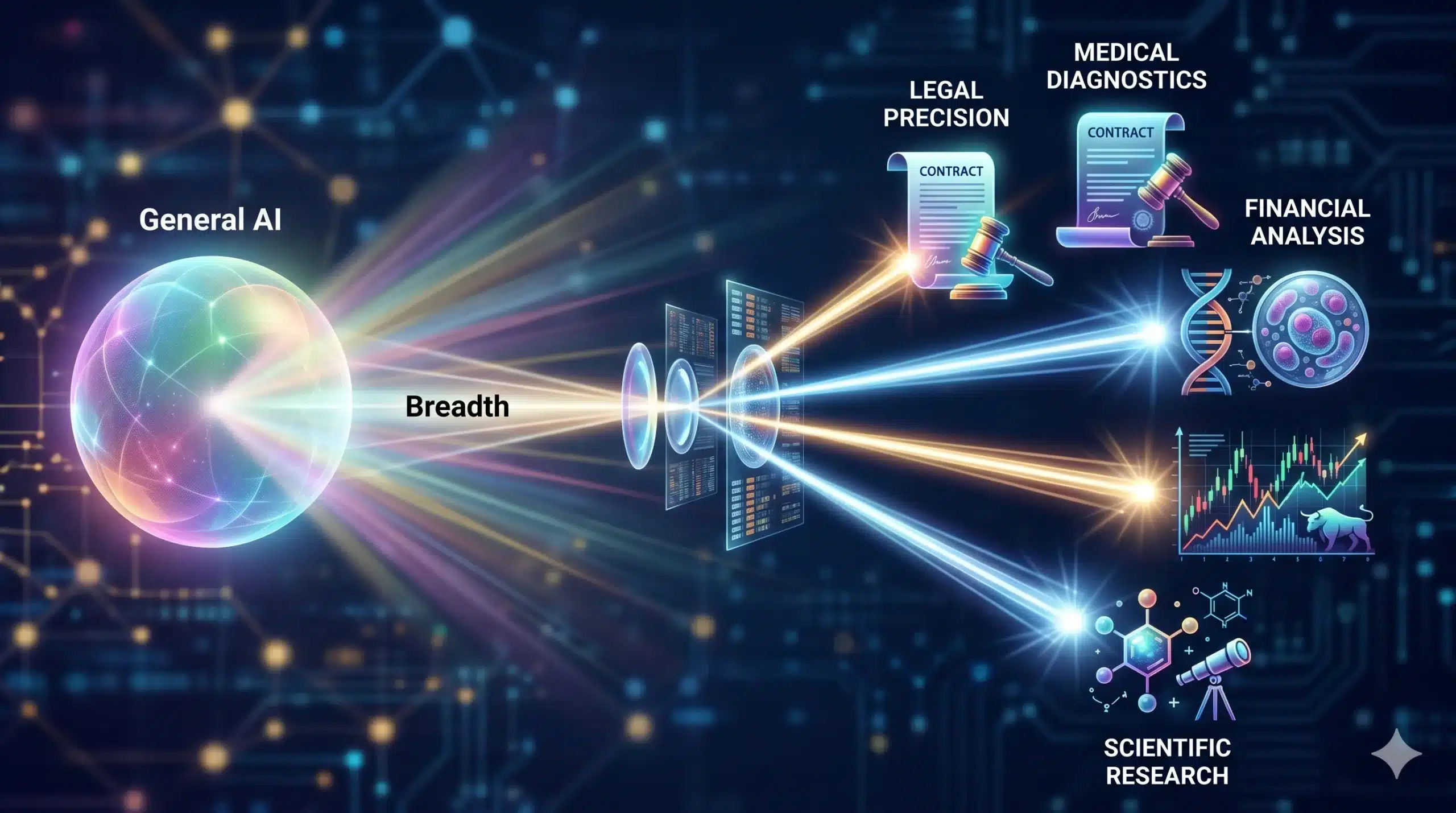

We are witnessing the “Great Specialization.” As organizations look for a clear path to ROI, the focus has shifted from the breadth of a model to its depth. This is the era of Domain-Specific Models (DSMs)—leaner, faster, and hyper-targeted AI trained on the specialized vernacular of specific industries. In the race for enterprise efficiency, the specialized scalpel is officially outperforming the general-purpose sledgehammer.

The “Why”: The Collapse of Generalist Efficiency

The shift toward DSMs is driven by a simple economic reality: general-purpose models are becoming too expensive and too unpredictable for mission-critical tasks. When an LLM is trained on the entire public internet, it inherits the internet’s noise. It struggles with specialized jargon, hallucinating answers where precise terminology is required. For businesses, “close enough” is an unacceptable metric when dealing with medical diagnostics, high-frequency trading, or structural engineering.

Technologically, we have reached the point of “saturation” with model size. Adding another trillion parameters to a general model offers marginal improvements in reasoning but sharply higher infrastructure costs and latency, because the compute and memory needed for every generated token grow with parameter count. DSMs solve this by concentrating “intelligence” where it matters. By narrowing the scope, developers can achieve higher accuracy on in-domain tasks with significantly smaller models, leading to better scalability and a lower “inference tax.”
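A back-of-the-envelope sketch makes the “inference tax” concrete. It assumes two common rules of thumb for dense transformer models: generating one token costs roughly 2 floating-point operations (FLOPs) per parameter, and holding the weights in 16-bit precision takes 2 bytes per parameter. The model sizes are hypothetical placeholders, not figures for any real product, and the estimate ignores long-context attention, the KV cache, batching, and hardware efficiency.

```python
from dataclasses import dataclass

BYTES_PER_PARAM_FP16 = 2  # assumption: weights stored in 16-bit precision (fp16/bf16)


@dataclass
class ModelProfile:
    name: str
    params: float  # total parameter count; a dense model is assumed

    def flops_per_token(self) -> float:
        # Rule of thumb for a dense decoder forward pass: ~2 FLOPs per parameter per token.
        return 2 * self.params

    def weight_memory_gb(self, bytes_per_param: int = BYTES_PER_PARAM_FP16) -> float:
        # Memory needed just to hold the weights (ignores KV cache and activations).
        return self.params * bytes_per_param / 1e9


def compare(general: ModelProfile, specialist: ModelProfile) -> dict[str, float]:
    """How many times more compute and weight memory the general model needs per token."""
    return {
        "compute_ratio": general.flops_per_token() / specialist.flops_per_token(),
        "memory_ratio": general.weight_memory_gb() / specialist.weight_memory_gb(),
    }


if __name__ == "__main__":
    # Hypothetical sizes chosen only for illustration.
    general = ModelProfile("general-purpose (hypothetical)", 400e9)
    specialist = ModelProfile("domain-specific (hypothetical)", 7e9)
    for model in (general, specialist):
        print(f"{model.name}: {model.flops_per_token():.2e} FLOPs/token, "
              f"{model.weight_memory_gb():.0f} GB of 16-bit weights")
    print(compare(general, specialist))
```

Under these assumptions both costs scale linearly with parameter count, so the hypothetical 400-billion-parameter model needs about 57 times the per-token compute and weight memory of the 7-billion-parameter one (800 GB versus 14 GB of weights).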

Technical Breakdown: How DSMs Achieve Precision

DSMs don’t just “know” more about a subject; they are architected to understand the unique data relationships of a specific field.

- Curated Data Sets: Unlike general models that scrape the web, DSMs are trained on high-quality, verified textbooks, proprietary research, legal filings, or technical manuals.

- Targeted Fine-Tuning: These models often use PEFT (Parameter-Efficient Fine-Tuning) methods such as LoRA (Low-Rank Adaptation), which freeze the foundational model’s weights and train only a small set of added parameters, layering specialized knowledge on top without retraining or duplicating the full model.

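- Reduced Hallucination via RAG: DSMs are frequently paired with Retrieval-Augmented Generation (RAG) tied to private, domain-specific databases. Relevant passages are retrieved and supplied to the model alongside the question, so its answer can be grounded in, and cite, “ground truth” documents instead of relying only on what it memorized during training. This reduces hallucination, though it does not eliminate it.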

- Lower Latency: Because they are smaller, DSMs can run on “edge” devices or localized servers, keeping response times low enough for real-time workflows such as robotic surgery or live stock trading.
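The LoRA technique named above can be sketched for a single linear layer: the pretrained weight matrix W0 stays frozen, and fine-tuning updates only two small matrices, A (rank × d_in) and B (d_out × rank), whose scaled product is added to W0’s output. This is a minimal pure-Python illustration, not a training recipe; the class and parameter names are hypothetical, the training loop is omitted, and real projects would normally use a library such as Hugging Face’s `peft` instead.

```python
import random

Matrix = list[list[float]]
Vector = list[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Hypothetical LoRA-style layer: frozen weights plus a trainable low-rank update.

    Computes y = W0 @ x + (alpha / rank) * B @ (A @ x); only A and B
    would be updated during fine-tuning.
    """

    def __init__(self, base_weight: Matrix, rank: int, alpha: float = 1.0, seed: int = 0):
        self.base_weight = base_weight  # frozen pretrained weights, shape (d_out, d_in)
        d_out, d_in = len(base_weight), len(base_weight[0])
        rng = random.Random(seed)
        # A: (rank, d_in) with small random values; B: (d_out, rank) set to zero,
        # so before any training the layer behaves exactly like the base layer.
        self.A: Matrix = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B: Matrix = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x: Vector) -> Vector:
        base = matvec(self.base_weight, x)
        update = matvec(self.B, matvec(self.A, x))  # low-rank path: d_in -> rank -> d_out
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self) -> int:
        # Only the adapter matrices A and B are trained.
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])

    def frozen_params(self) -> int:
        return len(self.base_weight) * len(self.base_weight[0])


if __name__ == "__main__":
    d_in = d_out = 512
    rng = random.Random(42)
    # Hypothetical stand-in for one pretrained weight matrix.
    w0 = [[rng.gauss(0.0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
    layer = LoRALinear(w0, rank=8, alpha=16.0)
    print(f"trainable: {layer.trainable_params():,} / frozen: {layer.frozen_params():,}")
```

Because B starts at zero, the adapted layer initially reproduces the base layer exactly. For the 512 × 512 example at rank 8, only 8,192 of 262,144 weights (about 3%) would be trained, which is why adapters for several domains can share one base model.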
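The retrieval step of RAG can be illustrated with a deliberately simple sketch: score every document in a private corpus against the question, then place the best matches into the prompt with an instruction to answer only from those sources and cite them. Word-count cosine similarity stands in here for the embedding model and vector database a production system would typically use; the corpus, document IDs, and function names are hypothetical, and the call to the language model itself is omitted.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Return the k (document ID, score) pairs most similar to the query, best first."""
    q = Counter(tokenize(query))
    scored = [(doc_id, cosine(q, Counter(tokenize(text)))) for doc_id, text in documents.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    """Assemble a grounded prompt: retrieved sources first, then the question."""
    hits = retrieve(query, documents, k)
    sources = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using only the sources below and cite them by ID. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    # Hypothetical private corpus standing in for a domain-specific database.
    corpus = {
        "contract-12": "Either party may terminate this agreement with 30 days written notice.",
        "policy-07": "Warranty claims require the original proof of purchase.",
        "memo-03": "The cafeteria closes at 3 pm on Fridays.",
    }
    print(build_prompt("How much notice is needed to terminate the agreement?", corpus, k=1))
```

Instructing the model to cite the bracketed IDs lets a reviewer trace each answer back to a specific source document.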

The Specialization Spectrum

| Feature | General-Purpose LLMs | Domain-Specific Models (DSMs) |
| --- | --- | --- |
| Knowledge Base | Everything (Breadth) | Deep Industry Knowledge (Depth) |
| Accuracy | High for general, low for niche | Elite for niche, low for general |
| Operational Cost | High (Massive Compute) | Lower (Optimized/Smaller) |
| Primary Risk | Generic Hallucinations | Over-specialization (Tunnel vision) |

Real-World Impact: From Law to the Laboratory

The impact of DSM specialization is most visible in industries where the cost of error is high. In Legal Tech, a DSM trained on centuries of case law can identify precedents and subtle contract contradictions that a general model would overlook. It doesn’t just “write a contract”; it can check that contract against specific regional statutes and flag clauses that may not comply.

In Game Development, a developer building “hybrid-casual” survival runners can use a DSM trained specifically on Unity physics and Synty asset logic. Instead of a generic C# “how-to,” they receive code snippets pre-optimized for their specific mobile infrastructure, shortening the path from concept to launch on the Google Play Store.

In Healthcare, DSMs like BioGPT, trained on biomedical research literature, are outperforming generalist models at analyzing complex biochemical interactions. By learning the “language” of biomedicine, from protein interactions to molecular structures, these models promise to cut parts of the drug discovery phase from years to months, a tangible ROI that would justify the initial training costs.

Challenges & Ethics: The Privacy and Purity Bottleneck

Specialization brings its own set of “bottlenecks,” primarily concerning data governance.

- Data Scarcity: To build a truly elite DSM, you need high-quality data. In many fields, this data is proprietary or protected by strict privacy laws (like HIPAA or GDPR), making it difficult to source training sets.

- The “Ecosystem” Silo: If every department in a company uses a different DSM, the risk of data silos increases. Ensuring seamless integration between a “Marketing DSM” and a “Legal DSM” requires robust cross-functional infrastructure.

- Algorithmic Bias: If a DSM is trained on a narrow set of historical data, it may reinforce industry biases. For instance, a “Hiring DSM” trained on a tech firm’s past 10 years of resumes might inadvertently favor certain demographics if the training data wasn’t properly sanitized.
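One common first check for the kind of bias described above is to compare how often the model advances candidates from each group. The sketch below computes per-group selection rates and flags any group whose rate falls below 80% of the most-selected group’s rate, the “four-fifths” rule of thumb from U.S. federal guidelines on employee selection. It is a screening heuristic rather than a full fairness audit, and the function names, group labels, and outcomes are hypothetical.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of candidates in each group that the model recommended advancing."""
    totals: dict[str, int] = defaultdict(int)
    advanced: dict[str, int] = defaultdict(int)
    for group, was_advanced in decisions:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each group's selection rate relative to the most-selected group."""
    best = max(rates.values())
    return {group: (rate / best if best else 1.0) for group, rate in rates.items()}


def flag_disparities(decisions: list[tuple[str, bool]], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (0.8 = four-fifths rule)."""
    ratios = impact_ratios(selection_rates(decisions))
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Hypothetical screening outcomes: (group label, did the model advance the candidate?)
    outcomes = [("group_a", i < 6) for i in range(10)] + [("group_b", i < 3) for i in range(10)]
    print(selection_rates(outcomes))
    print(flag_disparities(outcomes))
```

In this made-up example, group_b is advanced half as often as group_a (30% versus 60%), an impact ratio of 0.5, so it is flagged for review.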

The 5-Year Outlook: The Rise of Personal Micro-Models

Over the next three to five years, the “One Model to Rule Them All” philosophy will be replaced by a “Federated Model” approach. We will see the emergence of Micro-DSMs—AI that is so specialized it lives on a single device and understands only the specific tasks of that user.